Release 5.5.1

Copyright © 2022 DF/Net Research, Inc.

All rights reserved. No part of this publication may be re-transmitted in any form or by any means, electronic, mechanical, photocopying, recording, or otherwise, without the prior written permission of DF/Net Research, Inc. Permission is granted for internal re-distribution of this publication by the license holder and their employees for internal use only, provided that the copyright notices and this permission notice appear in all copies.

The information in this document is furnished for informational use only and is subject to change without notice. DF/Net Research, Inc. assumes no responsibility or liability for any errors or inaccuracies in this document or for any omissions from it.

All products or services mentioned in this document are covered by the trademarks, service marks, or product names as designated by the companies who market those products.

Google Play and the Google Play logo are trademarks of Google LLC. Android is a trademark of Google LLC.

App Store is a trademark of Apple Inc.

Oct 03, 2022

Table of Contents

- Preface

- 1. Introduction

- 2. DFdiscover Study Files

- 3. Shell Level Programs

- 3.1. Introduction

- 3.2. User Credentials

- 3.3. Organization of Reference Pages

- 3.4. Alphabetical Listing

- DFaccess.rpc — Change access to a study database or , or query their current access status.

- DFattach — Attach one or more external documents to keys in a DFdiscover study

- DFaudittrace — Used by the DF_ATmods report to read study journal files. DF_ATmods produces an audit trail report showing database modifications for the specified study.

- DFbatch — Process one or more batch edit check files

- DFcompiler — Compile study-level edit check programs and output any warnings and/or errors encountered in the syntax.

- DFdisable.rpc — Disable a study database server or incoming fax daemon to make them unavailable to clients and incoming faxes

- DFenable.rpc — Enable a study database server or incoming fax daemon following a previous

- DFencryptpdf — Protect a PDF file by encrypting it with the specified password

- DFexport — Client-side, command-line interface for exporting data by plate, field or module; exporting change history; or exporting components of study definition

- DFexport.rpc — Export data records from one or multiple plates from a study data file

- DFfaxq — Display the members of the outgoing fax queue

- DFfaxrm — Remove faxes from the outgoing fax queue

- DFget — Get specified data fields from each record in an input file and write them to an output file

- DFgetparam.rpc — Retrieve and evaluate the value of the requested configuration parameter

- DFhostid — Display the unique DFdiscover host identifier of the system

- DFimageio — Request a study CRF image from the database

- DFimport.rpc — Import database records to a study database from an ASCII text file

- DFlistplates.rpc — List all plate numbers used in the study

- DFlogger — Re-route error messages from non-DFdiscover applications to syslog, which in turn writes the messages to the system log files, as configured in /etc/syslog.conf.

- DFpass — Locally manage user credentials for client-side command-line programs.

- DFpdf — Generate bookmarked PDF documents of CRF images

- DFpdfpkg — Generate multiple bookmarked PDF files for specified subject IDs or sites

- DFprint_filter — Format input file(s) for printing to a PostScript® capable printer, and print to a specified printer

- DFprintdb — Print case report forms merged with data records from the study database

- DFpsprint — Convert one or more input CRF images into PostScript®

- DFqcps — Convert a Query Report, previously generated by DF_QCreports, into a PDF file with barcoding, prior to sending the report to a study site

- DFreport — Client-side, command-line interface for executing reports

- DFsas — Prepare data set(s) and job file for processing by SAS®.

- DFsendfax — Fax or email a plain text, PDF, or TIFF file to one or more recipients

- DFsqlload — Create table definitions and import all data into a relational database

- DFstatus — Display database status information in plain text format

- DFtextps — Convert one or more input files into PDF

- DFuserdb — Perform maintenance operations on the user database

- DFversion — Display version information for all DFdiscover executables (programs), reports, and utilities

- DFwhich — Display version information for one or more DFdiscover programs, reports and/or utilities

- 4. Utility Programs

- 4.1. Introduction

- 4.2. Alphabetical Listing

- DFaddHylaClient — Create the symbolic links necessary for accessing HylaFAX on a DFdiscover server

- DFauditdb — Add or reload journal files to DFaudit.db sqlite database.

- DFcertReq — Request an SSL certificate signing for DFedcservice.

- DFclearIncoming — Clean out the fax receiving directory, processing all newly arrived faxes

- DFcmpSchema — Apply the data dictionary rules against the study database

- DFcmpSeq — Determine the appropriate values for each .seqYYWW file

- DFisRunning — Determine if the DFdiscover master program is currently running on the licensed DFdiscover machine

- DFmigrate — Upgrade study setup and configuration files from an old DFdiscover version to the current version.

- DFras2png — Convert Sun raster files in the study pages directory into PNG files

- DFshowIdx — Show the per plate index file(s) for a specific study

- DFstudyDiag — Report (diagnose) the current status of a study database server

- DFstudyPerms — Report, and correct, the permissions on all required DFdiscover sub-directories and files for a study

- DFtiff2ras — Convert a TIFF file into individual PNG files

- DFuserPerms — Import and update users and passwords, and optionally import roles, role permissions, and user roles

- 5. Edit checks

- 5.1. Introduction

- 5.2. Language Features

- 5.3. Database Permissions

- 5.4. Language Structure

- 5.5. Variables

- 5.6. Missing/Blank Data

- 5.7. Arithmetic Operators

- 5.8. Conditional Execution

- 5.9. Built-in Functions and Statements

- 5.9.1. Edit check Function Compatibility Notes

- 5.9.2. dfaccess

- 5.9.3. dfaccessinfo

- 5.9.4. dfalias2id

- 5.9.5. dfask

- 5.9.6. dfbatch

- 5.9.7. dfcapture

- 5.9.8. dfcenter

- 5.9.9. dfclosestudy

- 5.9.10. dfdate2str

- 5.9.11. dfday

- 5.9.12. dfdirection

- 5.9.13. dfentrypoint

- 5.9.14. dfexecute

- 5.9.15. dfgetfield

- 5.9.16. dfgetlevel/dflevel

- 5.9.17. dfgetseq

- 5.9.18. dfhelp

- 5.9.19. dfid2alias

- 5.9.20. dfillegal

- 5.9.21. dfimageinfo

- 5.9.22. dflegal

- 5.9.23. dflength

- 5.9.24. dflogout

- 5.9.25. dfmail

- 5.9.26. dfmatch

- 5.9.27. dfmessage/dfdisplay/dferror/dfwarning

- 5.9.28. dfmetastatus

- 5.9.29. dfmode

- 5.9.30. dfmoduleinfo

- 5.9.31. dfmonth

- 5.9.32. dfmoveto

- 5.9.33. dfneed

- 5.9.34. dfpageinfo

- 5.9.35. dfpassword

- 5.9.36. dfpasswdx

- 5.9.37. dfplateinfo

- 5.9.38. dfpref

- 5.9.39. dfprefinfo

- 5.9.40. dfprotocol

- 5.9.41. dfrole

- 5.9.42. dfsiteinfo

- 5.9.43. sqrt

- 5.9.44. dfstay

- 5.9.45. dfstr2date

- 5.9.46. dfstudyinfo

- 5.9.47. dfsubstr

- 5.9.48. dftask

- 5.9.49. dftime

- 5.9.50. dftoday

- 5.9.51. dftool

- 5.9.52. dftrigger

- 5.9.53. dfuserinfo

- 5.9.54. dfvarinfo

- 5.9.55. dfvarname

- 5.9.56. dfview

- 5.9.57. dfvisitinfo

- 5.9.58. dfwhoami

- 5.9.59. dfyear

- 5.9.60. int

- 5.10. Query operations

- 5.11. Reason operations

- 5.12. Lookup Tables

- 5.13. Looping

- 5.14. User-Defined Functions

- 5.15. Examples and Advice

- 5.16. Optimizing Edit checks

- 5.17. Creating generic edit checks

- 5.18. Debugging and Testing

- 5.19. Language Reference

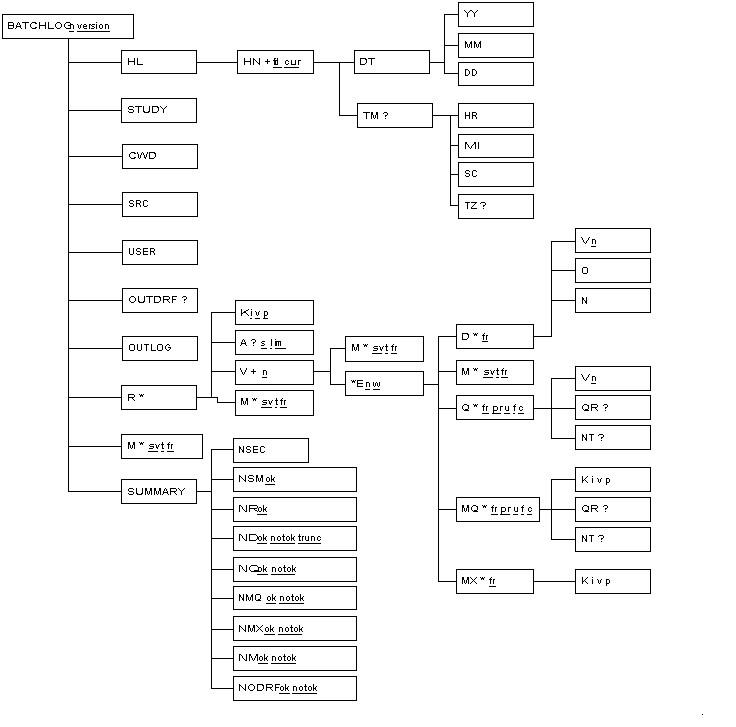

- 6. Batch Edit checks

- 7. Writing Your Own Reports

- 8. DFsas: DFdiscover to SAS®

- 9. DFsqlload: DFdiscover to Relational Database Tables

- A. Copyrights - Acknowledgments

- A.1. External Software Copyrights

- A.1.1. DCMTK software package

- A.1.2. Jansson License

- A.1.3. Mimencode

- A.1.4. RSA Data Security, Inc., MD5 message-digest algorithm

- A.1.5. mpack/munpack

- A.1.6. TIFF

- A.1.7. PostgreSQL

- A.1.8. OpenSSL License

- A.1.9. Original SSLeay License

- A.1.10. gawk

- A.1.11. Ghostscript

- A.1.12. MariaDB and FreeTDS

- A.1.13. QtAV

- A.1.14. FFmpeg

- A.1.15. c3.js

- A.1.16. d3.js


For software support, please contact the DFdiscover team:

- via email, <help@dfnetresearch.com>;

- visit our website, www.dfnetresearch.com.

A number of conventions have been used throughout this document.

Any freestanding sections of code are generally shown like this:

    # this is example code
    code = code + overhead;

If a line starts with # or %, this character denotes the system prompt and is not typed by the user.

Text may also have several styles:

Emphasized words are shown as follows: emphasized words.

Filenames appear in the text like so: dummy.c.

Code, constants, and literals in the text appear like so: main.

Variable names appear in the text like so: nBytes.

Text on user interface labels or menus is shown as: Printer name, while buttons in user interfaces are shown as .

Menus and menu items are shown as: > .


This guide is for programmers, and for those who aspire to be. It covers DFdiscover file formats and those tools that are executed at the shell level. As a result, some familiarity with the UNIX operating system and UNIX shell level programming is assumed.

If this is your first encounter with UNIX we strongly recommend that you look for a good UNIX programming book in your local bookstore. We would not hesitate to recommend The UNIX Programming Environment by Kernighan and Pike, and The Awk Programming Language by Aho, Kernighan and Weinberger. The former is an excellent introduction to writing UNIX shell scripts, while the latter describes awk, a simple C-like language which is ideal for manipulating data files. All of the tools described in these 2 books come as standard components of the UNIX operating system.

In the remaining chapters of this guide we will describe:

DFdiscover Study Files. A description of the location and format of all study files (including the configuration and data files) used in all DFdiscover studies

Shell Level Programs. Over a dozen shell level commands for doing such things as:

- exporting data records from a study database

- selecting individual data fields

- reformatting exported data records

- importing data records from other sources into a DFdiscover study database

- sending and managing faxes

- getting study configuration parameters

These programs can be used in shell scripts to create your own programs. When combined with standard UNIX commands (like awk, sed, grep, etc.) they provide an extremely powerful and flexible way of creating study report programs.

Utility Programs. Descriptions of several infrequently used, yet useful, utility programs that can simplify some DFdiscover management tasks.

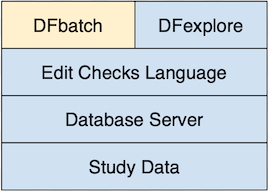

Edit checks. A programming language for writing and executing edit checks that occur in real-time during data validation.

Batch Edit checks. An extension of the edit checks language that permits execution of edit checks in batch, non-interactive mode.

DFsas: DFdiscover to SAS®. An environment for generating SAS® job and data files from a DFdiscover study database and study schema.

DFsqlload: DFdiscover to Relational Database Tables. A program that creates relational database tables from a DFdiscover study database and study schema.

The following cribsheet is for DFdiscover programmers and summarizes all relevant DFdiscover database limits and formats. This cribsheet is to be used in conjunction with the information that follows in this chapter.

| Description | Limit | Comments |
|---|---|---|
| DFdiscover Study Number | 1-999 | The suggested range for study numbers is 1-249, as study numbers 250-255 are reserved for DFdiscover test and validation studies (e.g. ATK = 254). With the appropriate software license, study numbers 256-999 are available for defining EDC studies. |
| Plate Number | 0-501, 510, 511 | Plates 501 and 511 are reserved by DFdiscover for Query Reports and cannot be re-defined at the user level. Plate 0 references the new record queue. Plate 510 is reserved by DFdiscover for Reason records. |
| Visit/Sequence Number (barcoded) | 0-511 | |
| Visit/Sequence Number (first data field) | 0-65535 | Any data field representing the visit/sequence number must be defined in the database as field #6 using DFdiscover schema numbering. |
| Site Number | 0-21460 | This limit applies to the site number only. A subject identifier is concatenated to the site number to obtain the subject ID. |
| Subject ID Number | 0-281474976710655 | For subject IDs that are composed of site # + ID #, this limit applies to the concatenated value of the two. This field can contain at most 15 digits. |
| Any numeric value | -2147483647 to 2147483647 | Any numeric field, except the subject ID field, can contain at most 10 digits, including any leading sign and decimal point. This limit applies to the following DFdiscover field types, which have a base numeric value: numeric, visual analog scale (VAS), choice codes, check codes. |
| Query Use | 0 = none, 1 = external, 2 = internal | |
| Query Type | 0 = none, 1 = Q&A (clarification), 2 = refax (correction) | |
| Query Category Code | 1=missing, 2=illegal, 3=inconsistent, 4=illegible, 5=fax noise, 6=other, 21=missing page, 22=overdue visit, 23=edit check missing page, 30-99=user-defined problem type | |
| Query Status | 0=pending review; 1=new; 2=in unsent report; 3=resolved, NA; 4=resolved, irrelevant; 5=resolved, corrected; 6=in sent report | |
| Query Detail Field | max 500 characters | |
| Query Note Field | max 500 characters | |
| Missed Data Log Explanation Field | max 500 characters | |
| Default Date Format | YY/MM/DD | |
| Validation Level (system) | 0-7 | Level 0 represents new, not yet entered, records. |
| Validation Level (user) | 1-7 | A user cannot assign a validation level of 0 to a data record. |
| Maximum Data Record Length | 16384 ASCII (4096 UNICODE) characters | This is the maximum length that the system can accept and includes 55 characters of overhead maintained by the system. Therefore, the length of data record available for user-defined fields is 55 characters less. |
| Maximum DFsas Record Length | 2048 characters | DFsas is unable to process input files for SAS® greater than this size. |
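The limits in the cribsheet lend themselves to simple range checks in study specific programs. The following Python sketch transcribes a few of them; the constant and function names are illustrative assumptions and are not part of DFdiscover.

```python
# Limits transcribed from the cribsheet above. All names are hypothetical.
MAX_SUBJECT_ID = 281474976710655   # equals 2**48 - 1
MAX_RECORD_LEN = 16384             # ASCII characters, including system overhead
RECORD_OVERHEAD = 55               # characters maintained by the system


def is_valid_study(study: int) -> bool:
    """Study numbers range from 1 to 999."""
    return 1 <= study <= 999


def is_valid_plate(plate: int) -> bool:
    """Plate numbers are 0-501, plus the reserved plates 510 and 511."""
    return 0 <= plate <= 501 or plate in (510, 511)


def is_valid_visit(visit: int, barcoded: bool) -> bool:
    """Barcoded visit numbers stop at 511; first-data-field ones at 65535."""
    upper = 511 if barcoded else 65535
    return 0 <= visit <= upper


def is_valid_subject_id(subject_id: int) -> bool:
    """The limit applies to the concatenated site # + ID # value."""
    return 0 <= subject_id <= MAX_SUBJECT_ID


def user_record_capacity() -> int:
    """Characters left for user-defined fields in an ASCII data record."""
    return MAX_RECORD_LEN - RECORD_OVERHEAD
```

With these definitions, `user_record_capacity()` evaluates to 16329, which is the 16384-character maximum less the 55 characters of overhead.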


This chapter describes the files maintained under each DFdiscover study directory. It starts with a brief overview of the top-level directories and then describes the files kept under each directory in detail. A similar chapter, System Administrator Guide, DFdiscover System Files, describes the files located under a DFdiscover installation directory.

The following directories are part of a standard DFdiscover study definition.

bkgd. This directory holds CRF background images created by DFsetup. Three files are created for each study plate. These include the plate background used by DFsetup (plt###), the data entry screen used by DFexplore (DFbkgd###), and a background used when printing CRFs containing database values from DFprintdb or DFexplore (DFbkgd###.png).

Note: This directory must not be used to store any other files. Extraneous files will be deleted when CRF images are published from a development study to its production study, or when reverting a development study to the current state of its production study.

data. This directory holds the study data files, queries data file, and journal files. The following files are described in detail later in this chapter in The study data directory:

- plt###.dat - per plate data files. The study data files are created from the data recorded on the study CRFs.
- DFqc.dat - the Query database. The quality control data file contains all field level queries added to flag CRF problems and request data clarifications from investigators.
- DFreason.dat - reason for change records. The reason for change data file contains all field level reasons for data changes clarifying, if required, why a field's data value was changed.
- DFin.dat - the new records database. The new record queue contains all records that have been received and ICRed by the DFdiscover software but not yet validated.
- plt###.dat - missed records. Missed records serve as placeholders in data files indicating that requested data records will never be available.
- plt###.ndx - per plate index files. Per plate index files speed database searching and hold record lock information.
- YYMM.jnl - monthly database journal files. The journal files record all database transactions and provide an audit trail of additions and modifications.
- DFaudit.db - sqlite audit trail. The sqlite version of the journal files.

dfsas. This directory contains any stored SAS® jobs that were created from DFexplore.

dfschema. This directory contains any stored DFschema files which are used to track changes to the study setup. These are used by DFaudittrace to generate records for DF_ATmods.

drf. This directory contains any .drf files (DFdiscover Retrieval Files) created by server-side tools, including reports and the program DFmkdrf.jnl. The common .drf files created by DFdiscover reports include:

- DupKeys.drf. Lists any duplicate primary keys found in the database by the last execution of DF_XXkeys.
- VDillegal.drf. Lists any illegal visit dates found in the database by the last execution of DF_XXkeys.
- VDincon.drf. Lists any inconsistent visit dates found in the database by the last execution of DF_XXkeys.
- DFunexpected.drf. Lists any unexpected data records found in the database by the last execution of DF_QCupdate.

ecbin. Any study specific scripts or programs that are run by edit check function dfexecute(), and any Plate Arrival Trigger scripts defined in the DFsetup Plates View, must be stored in the study level ecbin directory. Executables can also be stored in the DFdiscover level ecbin directory for use in all studies. The study level ecbin directory has priority if the same program or script is stored in both locations.

ecsrc. This directory contains the edit check files: DFedits and any other edit check source files referenced in 'include' statements. Include files can also be stored in the DFdiscover level ecsrc directory. The study level ecsrc directory has priority if the same include file is stored in both locations.

lib. All study configuration files, created through both the DFadmin and DFsetup tools, are located in this directory. The following files are described in detail later in this chapter in The study lib directory:

- DFccycle_map - conditional cycle map. Defines cycles that may be required, unexpected or optional, depending on specified database conditions (optional).
- DFcenters - sites database. Study sites database includes information on all clinical sites participating in the study.
- DFcplate_map - conditional plate map. Defines plates that may be required, unexpected or optional, depending on specified database conditions (optional).
- DFcterm_map - conditional termination map. Defines database conditions that signal termination of subject follow-up (optional).
- DFcvisit_map - conditional visit map. Defines visits that may be required, unexpected or optional, depending on specified database conditions (optional).
- DFCRFType_map - CRF type map. Defines categories for different CRF background types (e.g. translations) (optional).
- DFcrfbkgd_map - CRF background map. Defines visits with CRF backgrounds used across multiple visits (optional).
- DFedits.bin - published edit checks. The "published" equivalent of the edit checks, for use by DFexplore clients only (optional).
- DFfile_map - file map. Specifies each unique CRF plate used in the study.
- DFlut_map - lookup table map. Defines and associates lookup table names with directory names of lookup tables. Also includes definition for query lookup table, if it exists (optional).
- DFmissing_map - missing value map. Missing value codes (and labels) used in the study (optional).
- DFpage_map - page map. Used to specify descriptive labels to replace the visit/sequence and plate numbers that are used by default to identify problems on the Query Reports (optional).
- DFqcsort - Query and CRF sort order. Specifies a sort order for queries as written on Query Reports. The default is to sort by field number within plate number within visit/sequence number within subject ID (optional).
- .DFreports.dat - reports history. Personal file of DFdiscover report commands (and options) corresponding to reports which the user runs in the reports tool (optional).
- DFschema - database schema. Study database schema (or dictionary) describes all variables on all plates, as specified in the setup tool.
- DFschema.stl - database schema styles. Study database schema (or dictionary) describes all variable styles defined for the study in the setup tool.
- DFserver.cf - server configuration. Configuration file for the study database server.
- DFsetup - study definition. This is a JSON format file maintained and used by DFsetup to record all study setup information, including all plate, style and variable definitions.
- DFsubjectalias_map - subject alias map. Used to specify descriptive labels to optionally replace the numeric subject ID used as an identifier throughout DataFax.
- DFsubjectalias_map.log - subject alias change log. Logs all changes to the mapping of subject ID to subject alias over the course of a study.
- DFtips - ICR tips. This file contains the coordinates, field type and legal values for all variables defined on all plates. It is used by the ICR software to create the initial data record from new CRF pages that arrive by fax.
- DFvisit_map - visit map. This file describes the scheduling of subject assessments during the trial and the CRF pages expected at each assessment.
- DFwatermark - watermark. This file describes watermarks used for printed output assigned by role.
- QCcovers - Query cover pages. This file contains formatting information to be included in a Query Report cover sheet. To include a cover sheet for a Query Report, QCcovers must first be defined.
- QCmessages - Query Report messages. This file contains messages to be included in Query Report cover sheets. More than one message may be included in a single Query Report; however, DF_QCreports expects the cover sheet information and all messages to fit on a single page.
- QCtitles - Query Report titles. This file describes how the report title and each title of the 3 sections of a DFdiscover Query Report are to be customized. DF_QCreports checks for the existence of this file and will use it if it exists; otherwise standard titles will be produced.
- DFqcproblem_map - Query category code map. This file contains all the system-defined and user-defined query category codes.
- DFreportstyle - DFdiscover Report styling. This file contains styling "instructions" for reports, excluding Legacy reports. Styling includes font choices and colors used in graphs and charts.

lut. This directory contains all study specific lookup tables. Lookup tables can also be stored in directory lut at the DFdiscover level. Study level files have priority if a lookup table with the same file name is stored in both locations. Lookup table file names must be associated with an edit check name in DFlut_map (found in the study lib directory) before they can be used in edit checks.
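The ecbin, ecsrc and lut directories share one lookup rule: a study level file takes priority over a DFdiscover level file of the same name. A minimal Python sketch of that rule follows. The function name and the idea of passing both base directories as arguments are assumptions made for illustration; this is not how DFdiscover itself is implemented.

```python
from pathlib import Path
from typing import Optional


def resolve_shared_file(name: str, study_dir: Path, dfdiscover_dir: Path,
                        subdir: str) -> Optional[Path]:
    """Return the copy of `name` that takes priority, or None if absent.

    `subdir` is one of "ecbin", "ecsrc" or "lut". The study level directory
    is searched first, so it wins when the file exists in both locations.
    """
    for base in (study_dir, dfdiscover_dir):
        candidate = base / subdir / name
        if candidate.is_file():
            return candidate
    return None
```

A study specific report could call this once per lookup table to learn which copy would be in effect before reading it.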

pages. This directory holds all standard (SD) resolution (100 dpi) CRF image and supporting document files for all CRF pages received or uploaded during the study. Each CRF page received as a fax or PDF is stored as a PNG file (Sun Rasterfile in pre-2014 DFdiscover releases), and requires about 25K bytes of disk space (i.e. 40 CRF pages per MB). Supporting documents can be audio, video, DICOM or PDF files and will be in their native format.

DFdiscover creates subdirectories, below the study pages directory, in which to store the CRF image files. These subdirectories are named by the year and week (in yyww format) in which the CRFs arrived. For example, a study which started on January 1, 1995 would have subdirectories named: 9501, 9502, 9503, etc. through to the end of the trial.

Within each of the yyww subdirectories, the individual CRF page files are named by the concatenation of the sequence number of the fax (starting at 0001 each week) in which they arrived during that week, and their page number within the fax. The file-naming format is ffffppp. For example, if the first fax to arrive in the week of 9501 contained 3 pages, it would be represented by the following files beneath the study pages directory: 9501/0001001, 9501/0001002, and 9501/0001003. The ffff part of the file name is a sequential value constructed from 4 characters, each character taken from the alphabet:

0 1 2 3 4 5 6 7 8 9 B C D F G H J K L M N P Q R S T V W Y Z

This is essentially the digits 0 through 9 followed by the uppercase letters A through Z, with the exception of the letters A, E, I, O, U, and X. This part of the file name thus lies between 0001 and ZZZZ, which allows for a total of 30x30x30x30-1 = 809,999 faxes per week. Example file names within a week include:

- 0001005 - 5th page of 1st fax
- 000B001 - 1st page of 10th fax
- 000Z011 - 11th page of 29th fax
- 0010002 - 2nd page of 30th fax
- 01C0004 - 4th page of 1230th fax
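The ffffppp naming scheme amounts to a 4-character base-30 number followed by a 3-character page number. The Python sketch below decodes a page file name into its fax sequence number and page number, and encodes a sequence number back into its ffff form. The function names are illustrative, and the code assumes the ppp part is always three decimal digits, as in the examples above.

```python
from typing import Tuple

# The 30-symbol alphabet listed above: digits, then consonants except X.
FAX_ALPHABET = "0123456789BCDFGHJKLMNPQRSTVWYZ"


def decode_page_name(name: str) -> Tuple[int, int]:
    """Split an ffffppp file name into (fax sequence number, page number)."""
    if len(name) != 7:
        raise ValueError(f"expected 7 characters, got {name!r}")
    fax_number = 0
    for ch in name[:4]:
        # str.index raises ValueError for characters outside the alphabet
        fax_number = fax_number * 30 + FAX_ALPHABET.index(ch)
    return fax_number, int(name[4:])


def encode_fax_sequence(fax_number: int) -> str:
    """Return the 4-character ffff form of a weekly fax sequence number."""
    if not 1 <= fax_number <= 30 ** 4 - 1:
        raise ValueError("fax sequence number must be between 1 and 809999")
    symbols = []
    for _ in range(4):
        fax_number, remainder = divmod(fax_number, 30)
        symbols.append(FAX_ALPHABET[remainder])
    return "".join(reversed(symbols))
```

For example, `decode_page_name("01C0004")` returns `(1230, 4)`, because 1 x 900 + 11 x 30 + 0 = 1230, and `encode_fax_sequence(809999)` returns `"ZZZZ"`.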

pages_hd. This directory holds all higher (HD) resolution (300 dpi) CRF images and supporting document files received or uploaded during the study. DFdiscover creates subdirectories and stores the files in the same manner as the pages directory.

reports. It is recommended that all study specific reports, i.e. those programs which have been created specifically for a particular clinical trial, are stored in the study reports directory. This helps to standardize maintenance across studies.

Documentation for study specific reports must also be stored in this directory in a file named .info, which uses the same format used to document the standard DFdiscover reports. The specific requirements of this file and its format are described in Writing Documentation for Study Specific Reports.

reports/QC. This directory is used to store the standard DFdiscover Query Reports, created by DF_QCreports. The files and directories maintained under this directory are briefly described below.

ccc-yymmdd

The naming convention for Query Reports is a zero padded 3 digit site ID (if the site ID is greater than 999, it will be a 4 digit number), followed by a dash, and then the date on which the report was created. For example: 055-150815 would identify a Query Report created for site 55 on Aug 15, 2015. Thus you can tell from a Query Report name, both whom it was created for and when.

Although this is helpful it does have one disadvantage, namely, it means that you cannot create more than one Query Report per day for an individual clinical site. If you do, the earlier one will be over-written. However, since Query Reports are sent to the clinical sites, and this isn't something you should do more than about once a week, and certainly not more than once a day, this approach to naming Query Reports works.
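The ccc-yymmdd convention can be reproduced in a few lines, which is convenient when a study specific program needs to locate or list Query Reports. This Python sketch is only an illustration of the naming rule described above; the function names are hypothetical.

```python
from datetime import date
from typing import Tuple


def query_report_name(site: int, created: date) -> str:
    """Build a Query Report name: zero padded site ID, dash, yymmdd.

    The 03d format pads to 3 digits and leaves 4 digit site IDs intact.
    """
    return f"{site:03d}-{created:%y%m%d}"


def parse_query_report_name(name: str) -> Tuple[int, str]:
    """Return (site ID, yymmdd string) for a Query Report name.

    The date is returned as text because a 2 digit year does not
    identify the century.
    """
    site_part, _, date_part = name.partition("-")
    if not site_part.isdigit() or len(date_part) != 6 or not date_part.isdigit():
        raise ValueError(f"not a Query Report name: {name!r}")
    return int(site_part), date_part
```

Because the name contains only the site and the date, two reports built for the same site on the same day map to the same name, which is the overwrite behavior noted above.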

Query Reports remain under QC until they are sent to the clinical sites. Thus any reports that you see at this level have not been transmitted to the study clinics.

QC/sent

Query Reports are moved to this directory after they have been successfully sent to their respective clinical sites.

QC/internal

DF_QCreports is also able to create named, internal Query Reports, which can include subjects from more than one site. These reports are written directly to this directory.

QC_LOG

This is a plain ASCII file that lists any error messages reported during the last execution of DF_QCreports.

QC_NEW

This is a plain ASCII file that lists the Query Reports created by the most recent execution of DF_QCreports.

SENDFAX.log

This is a plain ASCII file in which DF_QCfax records the success or failure of its attempts to fax Query Reports to the clinical sites.

SENDFAX.qup

This is a plain ASCII file that lists all Query Reports which have been queued up for transmission to the clinical sites.

other files

Occasionally, DF_QCreports might be halted in mid-execution, by a power failure, or some other problem. In such cases it may not have time to remove the temporary files that it creates in the process of generating the Query Reports. These files generally contain a process ID#; for example you might see files that look like this: 18273_N.refax, 18273_N.qalist. These temporary files can, and should, be removed.

work. The work directory contains temporary files, DFdiscover retrieval files and summary data files. It is important to be aware of the files that DFdiscover creates and maintains in this directory so that programmers do not inadvertently overwrite them.

Temporary Files. The work directory is used for temporary files created by various reports and is the recommended location for temporary files created by study specific reports and for data files exported using DFexport.rpc. If DFexport.rpc is used to export study data files to this directory, or create temporary files here in the process of generating study specific reports, you need to be very careful in your selection of temporary file names, so that simultaneous execution of different programs does not result in temporary file name conflicts. A useful strategy is to include the process ID plus some part of the program name in all temporary file names, and also to check for and create a lock directory for any programs that should not be executed simultaneously by more than one user.

Because the work directory is used for temporary file creation by various DFdiscover programs, you might find temporary files that were not removed because a program failed to complete due to a power failure or some other problem. Any file that is several days old, and has what looks like a process ID number as part of its name, is probably a temporary file left over when a program failed to complete successfully. Such files can be removed. This should be done periodically to clean up the study work directory.
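The naming and locking strategy recommended for temporary files can be sketched as follows. This Python example is one possible approach, not a DFdiscover facility: the function names, the file name layout and the `.lock` suffix are all assumptions. It relies on directory creation failing when the directory already exists, which makes the lock check and the lock creation a single step.

```python
import os
from contextlib import contextmanager
from pathlib import Path


def temp_file_name(work_dir: Path, program: str, suffix: str = "tmp") -> Path:
    """Build a temporary file name containing the program name and process ID."""
    return work_dir / f"{program}_{os.getpid()}.{suffix}"


@contextmanager
def program_lock(work_dir: Path, program: str):
    """Hold a lock directory for programs that must not run simultaneously.

    Path.mkdir raises FileExistsError if another run already holds the lock.
    The lock directory is removed when the block exits, even on error.
    """
    lock_dir = work_dir / f"{program}.lock"
    lock_dir.mkdir()
    try:
        yield lock_dir
    finally:
        lock_dir.rmdir()
```

A report would wrap its body in `with program_lock(work_dir, "myreport"):` and write scratch data to `temp_file_name(work_dir, "myreport")`. A lock directory left behind by a crashed run has to be removed by hand, in the same way as the stale temporary files described above.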

Summary Files. The work directory contains a number of summary data files created by DF_XXkeys and DF_QCupdate and used by other programs including DF_QCreports and DF_PTvisits. The following files are described in detail later in this chapter in The study work directory:

The data, pages, and pages_hd directories must be owned by user datafax, with read, write and execute permissions for owner, and read and execute permissions for group. The bkgd, dde, frame, lib, reports, and work directories must have read, write and execute permissions set for all users of the study group (typically this will be UNIX group studies).

These permissions are set by DFadmin on those directories which it creates when the study is first registered. The utility program DFstudyPerms is available for diagnosing and correcting study permission problems. A detailed list of study files and directories checked by this program can be found at System Administrator Guide, Maintaining study filesystem permissions.
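For a quick look at the permission bits described above, without running DFstudyPerms, the standard Python stat module is sufficient. The sketch below checks mode bits only and does not verify that a directory is owned by user datafax. The constant and function names are illustrative assumptions, and the code is not a substitute for DFstudyPerms.

```python
import os
import stat

# rwx for owner, r-x for group: required on data, pages and pages_hd
OWNER_RWX_GROUP_RX = stat.S_IRWXU | stat.S_IRGRP | stat.S_IXGRP
# rwx for group: required on bkgd, dde, frame, lib, reports and work
GROUP_RWX = stat.S_IRWXG


def has_permissions(mode: int, required: int) -> bool:
    """True if every permission bit in `required` is set in `mode`."""
    return (stat.S_IMODE(mode) & required) == required


def directory_has_permissions(path: str, required: int) -> bool:
    """Apply has_permissions to the mode reported for `path` by os.stat."""
    return has_permissions(os.stat(path).st_mode, required)
```

For instance, a directory with mode 750 satisfies `OWNER_RWX_GROUP_RX` but not `GROUP_RWX`, because the group write bit is missing.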

Each file documented in this section is described with the following attributes:

Table 2.1. File attributes

| Heading | Description |

|---|---|

| Usual Name | the file name that is usually given to files having this format. Some files are kept at the DFdiscover directory level while others are kept separately with each study directory. |

| Type | one of: "clear text" or "binary". Clear text files can be reviewed with any text editor. |

| Created By | the name of the DFdiscover program(s) that create and modify this file. If you need to edit the contents of the file, use the program listed here. |

| Used By | the name of the DFdiscover program(s) that reference or read this file. |

| Field Delimiter | how fields within a record are delimited. Typically, the delimiter is a single character. |

| Record Delimiter | how records within the file are delimited. Typically, the delimiter is a single character. |

| Comment Delimiter | how comments within the file are delimited. If comments are not permitted within the file, "NA" is indicated. |

| Fields/Record | the expected number of fields per record. If the number of fields varies across records, the minimum number is given, followed by a +. |

| Description | a detailed description of the meaning of each field. |

| Example | one or more example records from the file. |

A DFdiscover Retrieval File (DRF) is an ASCII file in which each record identifies the subject ID, visit or sequence number and CRF plate number (in that order, | delimited) and optionally the image ID corresponding to a record in the study database.

DFdiscover retrieval files are created by DFexplore, DFdiscover reports, and the DFdiscover programs DFmkdrf.jnl and DFexport.rpc.

DFdiscover retrieval files created by DFexplore and DFdiscover reports and programs reside in the study drf directory. It is recommended that DRFs created using DFexport.rpc also be saved to the study drf directory. All DRFs must end with the .drf extension. Exercise caution when selecting DRF names to avoid conflicts with those DRF file names generated by DFdiscover applications.

Below is a description of the DRF file format.

Table 2.2. Data Retrieval Files (DRF)

| Usual Name | filename.drf |

| Type | clear text |

| Created By | DFexport.rpc, DFmkdrf.jnl, DFexplore |

| Used By | DFexplore |

| Field Delimiter | | |

| Record Delimiter | \n |

| Comment Delimiter | # |

| Fields/Record | 3+ |

| Description | Data records have the common fields and characteristics defined in Table 2.3, “DFdiscover Retrieval File field descriptions”. |

| Example |

This is an example of a DRF listing all primary records having one or more queries attached to them. The DRF filename

99001|1|2
99001|1011|9
99002|1|2
99002|22|5
99002|1011|9
99004|1|2

|

Table 2.3. DFdiscover Retrieval File field descriptions

| Field # | Contains | Description |

|---|---|---|

| 1 | subject/case ID | this is a number in the range of 0-281474976710655 used to uniquely identify each subject/case in the study database |

| 2 | visit/sequence number | a number in the range of 0-21460, that uniquely identifies each occurrence of a visit number for a subject ID |

| 3 | plate number | a number in the range of 1-501 that uniquely identifies the plate to be included in the DRF. |

| 4 | image identifier | the value in this optional field is the unique identifier of the CRF image identified by the keys in the first 3 fields. This field is used to identify a specific instance of a CRF when there are one or more secondaries having the same key fields. |

| 5 | optional text | this field can be used to provide a short descriptive message to DFexplore users. The text is displayed in the message window at the bottom of DFexplore when the data record is selected in the DFexplore record list. |
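The DRF layout described above is simple enough to read with a few lines of code. The following is a minimal sketch, assuming that comments occupy whole lines beginning with `#` and that the optional text field contains no `|` character; `DrfRecord` and `parse_drf` are illustrative names, not part of DFdiscover.

```python
from typing import NamedTuple, Optional

class DrfRecord(NamedTuple):
    subject_id: int
    visit: int
    plate: int
    image_id: Optional[str] = None   # optional field 4
    text: Optional[str] = None       # optional field 5

def parse_drf(lines):
    """Yield one DrfRecord per non-blank, non-comment line."""
    for line in lines:
        line = line.rstrip("\n")
        # Assumption: a comment is a whole line that starts with '#'.
        if not line.strip() or line.lstrip().startswith("#"):
            continue
        fields = line.split("|")
        if len(fields) < 3:
            raise ValueError(f"expected at least 3 fields: {line!r}")
        subject_id, visit, plate = (int(f) for f in fields[:3])
        image_id = fields[3] if len(fields) > 3 and fields[3] else None
        text = fields[4] if len(fields) > 4 and fields[4] else None
        yield DrfRecord(subject_id, visit, plate, image_id, text)
```

Passing an open file object, for example `parse_drf(open(path))`, works because the function only iterates over lines.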

The study data directory holds all study data files, including the plate files that correspond to CRF plates, the query data file and the database transaction journals. These files are managed by the study database server and should not be accessed directly. Instead the records required should be exported from the study database to another file, as described in DFexport.rpc, and then read from the exported file.

Data records should never be added to these database files without doing so through the study database server. The program DFimport.rpc is available and is the recommended method of sending new or revised data records to the study database server.

Occasionally you may need to make extensive changes to an entire database file. The typical scenario is a change to one or more of the study CRFs to add or remove fields. In this case the plate definitions originally entered in the setup tool also need to be changed to match the new structure of the corresponding data files. This is a major operation. It requires that the study server be disabled while the database and setup definitions are being changed, and that the affected data files be re-indexed before the study database server is re-enabled.

The following sections describe data and index files ending with .dat and .idx suffixes. Very rarely, you may notice files having the same name but with .tad or .xdi suffixes. These files are temporary files created and managed by the database server. They are present while the server performs sorting and garbage collection on a particular data file. As such they should be treated in the same manner as the other data and index files: basically, do not touch them. Typically, this is not a problem because the files are present for only a second or two.

Table 2.4. plt###.dat - per plate data files

| Usual Name | plt###.dat |

| Type | clear text |

| Created By | DFserver.rpc |

| Used By | DFserver.rpc |

| Field Delimiter | | |

| Record Delimiter | \n |

| Comment Delimiter | NA |

| Fields/Record | 9+ |

| Description |

After first validation, new records are moved from

to the study database files,

All database records are stored in free-field format with a

Data records have the common fields and characteristics described in Table 2.5, “plt###.dat field descriptions”. |

| Example |

Here is an example of a plate 4 data

record after level 1 validation to database file

1|1|9145/0045001|099|4|1|0123|egb|92/01/01|high blood pressure| Dr. Smith|92/01/10 10:23:23|92/01/10 10:23:23|

The text fields have been entered and record status has been set to

|

Table 2.5. plt###.dat field descriptions

| Field # | Contains | Description |

|---|---|---|

| 1 (DFSTATUS) | record status | Enumerated value from the list 1=final, 2=incomplete, 3=pending, 4=FINAL, 5=INCOMPLETE, 6=PENDING, 0=missed |

| 2 (DFVALID) | validation level | Enumerated value from the list 1, 2, 3, 4, 5, 6, 7 |

| 3 (DFRASTER) | raster name | the fax image id from which this data record was derived, if there was a fax image. The image id is always in the format YYWW/FFFFPPP or YYWWRFFFFPPP, where YY is the year (minus the century) in which the image id was created, WW is the week of the year, FFFF is a sequential base 30 value, in the range 0001-ZZZZ, representing the fax arrival order within the week, and PPP is the page number within the fax (also see pages). If the image id contains a slash (/) in the 5th character position, then the image id is for a fax. Prepending this image id with the value of the PAGE_DIR variable for the study creates a unique pathname to the file containing the fax image. If the image id contains R in the 5th character position, then the image id is for a raw data entered record, and in fact there is no image. |

| 4 (DFSTUDY) | study number | the DFdiscover study number (must be constant across all records for the same study). Legal limit is 1-999. |

| 5 (DFPLATE) | plate number | the plate number as identified in the barcode of the CRF. Within each data file, this field has a constant value. Legal limit is 1-501. |

| 6 (DFSEQ[a]) | visit/seq number | the visit or sequence number of this occurrence of a plate for a subject ID. Legal limit is 0-511 if defined in the barcode, and 0-65535 if defined as the first data field on the page. |

| 7 | subject ID | the subject identifier. Legal limit is 0-281474976710655. Subject identifiers are composed of site # + subject ID. This limit applies to the concatenated value of the two, where the site # legal limit is 0-21460. |

| 8 to N-3 | data | data fields from the corresponding CRF page |

| N-2 (DFSCREEN) | record status | Enumerated value from the list 1=final, 2=incomplete, 3=pending. This record status mimics the status recorded in the first field except that there is no distinction between primary and secondary. |

| N-1 (DFCREATE) | creation date | the creation date and time stamp in the format yy/mm/dd hh:mm:ss. The creation date and time stamp are added to the record the first time it is validated to a level higher than 0. It is never modified after initial addition to the record. |

| N (DFMODIFY) | last modification date | the last modification date and time stamp in the format yy/mm/dd hh:mm:ss. The initial value for this field is the same as the creation date. Each time any data within the record is modified, the modification stamp is updated. It is not updated if only the record's validation level has changed. |

[a] Field 6 has this name only if the field is defined to be in the barcode; otherwise, the name is user-defined.
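The image id rules for field 3 (DFRASTER) can be sketched in code as follows. This is an illustration under stated assumptions: `parse_image_id` and `image_path` are hypothetical helper names, and the path is formed by joining PAGE_DIR and the image id with a `/`, as suggested by the `$(PAGE_DIR)/9145/0045001` notation used elsewhere in this chapter.

```python
def parse_image_id(image_id):
    """Split a 12-character image id (YYWW/FFFFPPP or YYWWRFFFFPPP)."""
    if len(image_id) != 12 or image_id[4] not in "/R":
        raise ValueError(f"not a valid image id: {image_id!r}")
    return {
        "year": image_id[0:2],             # YY, year minus the century
        "week": image_id[2:4],             # WW, week of the year
        "has_image": image_id[4] == "/",   # 'R' marks raw data entry, no image
        "fax": image_id[5:9],              # FFFF, arrival order within the week
        "page": image_id[9:12],            # PPP, page number within the fax
    }

def image_path(page_dir, image_id):
    """Return the image pathname, or None for raw data entered records."""
    if not parse_image_id(image_id)["has_image"]:
        return None
    return f"{page_dir}/{image_id}"
```

For example, `image_path("/studies/demo/pages", "9145/0045001")` yields a path under the hypothetical `/studies/demo/pages` directory, while an id with `R` in the fifth position yields `None`.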

Table 2.6. plt###.dat - missed records

| Usual Name | plt###.dat |

| Type | clear text |

| Created By | DFserver.rpc |

| Used By | DFserver.rpc |

| Field Delimiter | | |

| Record Delimiter | \n |

| Comment Delimiter | NA |

| Fields/Record | 11 |

| Description |

If a CRF is reported missing, and is not expected to ever arrive (e.g. because

the subject missed a visit, or refused a test), it can be registered in the

study database using DFexplore.

These records have a status of |

| Example |

Here is an example of a missed data record 0|7|000/0000000|099|4|1|0123|1|subject was on vacation|92/01/10 10:23:23|92/01/10 10:23:23|

|

Table 2.7. Missed record field descriptions

| Field # | Contains | Description |

|---|---|---|

| 1 (DFSTATUS) | record status | Enumerated value - always contains a 0 for missed records, which is equivalent to the record status lost |

| 2 (DFVALID) | validation level | Enumerated value from the list 1, 2, 3, 4, 5, 6, 7 |

| 3 (DFRASTER) | image name | always contains 0000/0000000 for missed records. This is simply a placeholder value as there is no CRF image. |

| 4 (DFSTUDY) | study number | the DFdiscover study number (must be constant across all records for the same study) |

| 5 (DFPLATE) | plate number | the plate number |

| 6 | visit/seq number | the visit or sequence number |

| 7 | subject ID | the subject identifier |

| 8 | reason code | Enumerated value - the reason that the CRF was missed, selected from the following list: 1=Subject missed visit, 2=Exam or test not performed, 3=Data not available, 4=Subject refused to continue, 5=Subject moved away, 6=Subject lost to follow-up, 7=Subject died, 8=Terminated - study illness, 9=Terminated - other illness, 10=Other reason |

| 9 | reason text | an additional, optional explanation as to why the CRF was missed |

| 10 (DFCREATE) | creation date | the creation date and time stamp in the format yy/mm/dd hh:mm:ss |

| 11 (DFMODIFY) | last modification date | the last modification date and time stamp will always be the same as the creation date and time stamp as missed records are never modified once created (changes, if necessary, are made by deleting the existing missed record and creating another one) |
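To show how the missed record layout maps onto code, here is a minimal sketch that decodes an exported missed record. It assumes the record carries a trailing delimiter, as in the example above, and that the reason text contains no `|` character; `MISSED_REASONS` and `parse_missed_record` are illustrative names.

```python
# Reason code labels as listed for field 8.
MISSED_REASONS = {
    1: "Subject missed visit", 2: "Exam or test not performed",
    3: "Data not available", 4: "Subject refused to continue",
    5: "Subject moved away", 6: "Subject lost to follow-up",
    7: "Subject died", 8: "Terminated - study illness",
    9: "Terminated - other illness", 10: "Other reason",
}

def parse_missed_record(line):
    """Decode one 11-field missed record into a dictionary."""
    fields = line.rstrip("\n").split("|")
    if len(fields) == 12 and fields[-1] == "":
        fields.pop()  # drop the empty piece after the trailing delimiter
    if len(fields) != 11:
        raise ValueError(f"expected 11 fields, got {len(fields)}")
    if fields[0] != "0":
        raise ValueError("record status is not 0, so this is not a missed record")
    return {
        "level": int(fields[1]),
        "study": int(fields[3]),
        "plate": int(fields[4]),
        "visit": int(fields[5]),
        "subject_id": int(fields[6]),
        "reason": MISSED_REASONS[int(fields[7])],
        "reason_text": fields[8],
        "created": fields[9],
    }
```

As with all study data, the input should come from an export produced by DFexport.rpc rather than from the database file itself.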

Table 2.8. DFqc.dat - the Query database

| Usual Name | DFqc.dat |

| Type | clear text |

| Created By | |

| Used By | DFserver.rpc, DFexplore, DFbatch, DF_CTqcs, DF_ICqcs, DF_ICrecords, DF_PTcrfs, DF_PTqcs, DF_QCreports, DF_QCsent, DF_QCstatus, DF_QCupdate |

| Field Delimiter | | |

| Record Delimiter | \n |

| Comment Delimiter | NA |

| Fields/Record | 22 |

| Description |

The query data file, DFqc.dat, is maintained by the study server in the same manner as the CRF data files, DFin.dat and plt*.dat. All query records have exactly the same format, across all DFdiscover studies. Each query record has 22 fields, of which 5 (fields 4-8) are the keys needed to uniquely identify each record. Multiple queries are permitted per field provided their category codes are unique. As for study data files, you should always use DFexport.rpc to export the query database before using it. For this purpose, DFqc.dat has been assigned a DFdiscover reserved plate number of 511.

The fields are described in Table 2.9, “DFqc.dat field descriptions”. |

| Example |

An example of a newly added query that is to be included in the next Query Report sent to the originating site (the line break is for presentation purposes only): 1|1|0000/0000000|20|10|999|2100101|6|21|000000|0||1. Sample collection date |11/10/05|1|2|||user1 06/05/27 11:35:57|user1 06/05/27 11:35:57||1|

An example of a query that was sent out in a Query Report and has now been resolved: 5|4|0000/0000000|20|397|2030|1415412|11|14|060424|31|1|A2. Whole Blood 1ml ID # ||1|2|||brian 06/03/23 12:57:20|brian 06/03/23 12:57:20|barry 06/05/11 14:10:04|1|

|

Table 2.9. DFqc.dat field descriptions

| Field # | Contains | Description |

|---|---|---|

| 1 (DFSTATUS) | record status | current status of the query. Legal values are: 0=pending (review), 1=new, 2=in unsent report, 3=resolved NA, 4=resolved irrelevant, 5=resolved corrected, 6=in sent report, 7=pending delete |

| 2 (DFVALID) | validation level | validation level at which the query was created or last modified. The query validation level matches the level of the data record upon export only when the DFexport.rpc -m option is used. |

| 3 (DFRASTER) | raster name | must contain "0000/0000000". Any references to actual raster names will result in the deletion of those rasters if the referencing query is deleted. |

| 4 (DFSTUDY) | study number | the DFdiscover study number. Again, this number is constant across all records for one study. |

| 5 (DFPLATE) | plate number | the plate number of the record that this query is attached to |

| 6 (DFSEQ) | visit/seq number | the visit or sequence number of the record that this query is attached to |

| 7 (DFPID) | subject ID | the subject identification number |

| 8 (DFQCFLD) | query field number | the data entry field to which this query is attached |

| 9 (DFQCCTR) | site ID | the site ID that the query was sent to or will be sent to. This is determined when the query is created. Also, it is checked, and updated if necessary to account for a subject move, each time DF_QCupdate is executed. |

| 10 (DFQCRPT) | report number | the Query Report that the query was written to. The Query Report number is always in the format yymmdd, for the year, month, and day the report was created. If the query has not yet been written to a report, the value is 0. |

| 11 (DFQCPAGE) | page number | the page number of the Query Report, on which the query appears, or 0 if it has not yet been written to a report. |

| 12 (DFQCREPLY) | reply to query | the reply entered by a DFexplore user in the format name yy/mm/dd hh:mm:ss reply. The maximum length of this field is 500 characters. It is blank when a new query is created, or when an old query is reset to new. This field cannot be created or edited in DFexplore by users who do not have adequate permissions. When a new reply is entered, the query status is changed to pending. |

| 13 (DFQCNAME) | name | a description of the data field that is referenced by this query. Although this field can be edited when the quality control report is being added, its default value is taken from the "Description" part of the variable definition entered in the Setup tool. The maximum length of this field is 150 characters, but only the first 30 appear on quality control reports. |

| 14 (DFQCVAL) | value | the value held in the data field when the query was added. If the query is subsequently edited and the value of the data field has changed, this field is updated with the new value. The maximum length of this field is 150 characters. For overdue visit queries, this field contains a Julian representation of the date on which the visit was due (expressed as the number of days since Jan 1, 1900). |

| 15 (DFQCPROB) | category code | the numeric category code. Legal values are: 1=missing value, 2=illegal value, 3=inconsistent value, 4=illegible value, 5=fax noise, 6=other problem, 21=missing plate, 22=overdue visit, 23=EC missing plate, 30-99=user-defined category code. |

| 16 (DFQCRFAX) | refax code | refax request code. Should the CRF page, which this query references, be re-sent? Legal values are: 1=no (clarification query type), 2=yes (correction query type). |

| 17 (DFQCQRY) | query | any additional text needed to clarify the query to the investigator who will receive the Query Reports. The maximum length of this field is 500 characters. Note: the category code label from field 15 is included automatically and is often all that is needed. |

| 18 (DFQCNOTE) | note | any additional text needed to describe the problem when it is resolved. The maximum length is 500 characters. |

| 19 (DFQCCRT) | creation date | the creator, creation date and time stamp of the query in the format name yy/mm/dd hh:mm:ss. The creation date and time stamp are added when the query is first created. |

| 20 (DFQCMDFY) | last modification date | the modifier, last modification date and time stamp in the format name yy/mm/dd hh:mm:ss. The initial value for this field is the same as the creation date. Each time the query is modified, the modification stamp is updated. |

| 21 (DFQCRSLV) | resolution date | the resolver, resolution date and time stamp in the format name yy/mm/dd hh:mm:ss. Until the query is resolved, this field is blank. |

| 22 (DFQCUSE) | usage code | Legal values are: 1=send to site (these queries are formatted into a Query Report and sent to the appropriate study site), 2=internal use only (these queries do not appear in site Query Reports). |
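An exported query record can be turned into a dictionary keyed by the field names in the table above. The sketch below is illustrative only: it assumes a trailing delimiter as in the examples, assumes that free-text fields contain no `|` character, and uses the hypothetical helper names `parse_query` and `is_resolved`.

```python
# Field names in record order, as listed in the field descriptions.
QUERY_FIELDS = [
    "DFSTATUS", "DFVALID", "DFRASTER", "DFSTUDY", "DFPLATE", "DFSEQ",
    "DFPID", "DFQCFLD", "DFQCCTR", "DFQCRPT", "DFQCPAGE", "DFQCREPLY",
    "DFQCNAME", "DFQCVAL", "DFQCPROB", "DFQCRFAX", "DFQCQRY", "DFQCNOTE",
    "DFQCCRT", "DFQCMDFY", "DFQCRSLV", "DFQCUSE",
]

QUERY_STATUS = {
    0: "pending (review)", 1: "new", 2: "in unsent report",
    3: "resolved NA", 4: "resolved irrelevant", 5: "resolved corrected",
    6: "in sent report", 7: "pending delete",
}

def parse_query(line):
    """Map the 22 fields of one query record to their field names."""
    fields = line.rstrip("\n").split("|")
    if len(fields) == 23 and fields[-1] == "":
        fields.pop()  # drop the empty piece after the trailing delimiter
    if len(fields) != 22:
        raise ValueError(f"expected 22 fields, got {len(fields)}")
    return dict(zip(QUERY_FIELDS, fields))

def is_resolved(query):
    """True for the three resolved statuses (3, 4 and 5)."""
    return int(query["DFSTATUS"]) in (3, 4, 5)
```

The key fields 4 through 8 are then available as `DFSTUDY`, `DFPLATE`, `DFSEQ`, `DFPID` and `DFQCFLD`, which is convenient when matching queries to exported data records.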

Table 2.10. DFreason.dat - reason for change records

| Usual Name | DFreason.dat |

| Type | clear text |

| Created By | DFserver.rpc |

| Used By | DFserver.rpc |

| Field Delimiter | | |

| Record Delimiter | \n |

| Comment Delimiter | NA |

| Fields/Record | 12 |

| Description |

Data fields may require that a change to the value in the field be supported by a reason for the change. This reason information is recorded in . The contents of the record fields are described in Table 2.11, “Reason for data change record field descriptions”. |

| Example |

Here is an example of a reason data record 1|3|000/0000000|254|4|1|0123|9|phone|information provided by physician over the phone|nathan 03/01/10 10:23:23|nathan 03/01/10 10:23:23 It indicates that field 9 was changed because of the reason "information provided by physician over the phone". |

Table 2.11. Reason for data change record field descriptions

| Field # | Contains | Description |

|---|---|---|

| 1 (DFSTATUS) | record status | valid status codes include: 1=approved, 2=rejected, 3=pending |

| 2 (DFVALID) | validation level | Enumerated value from the list 1, 2, 3, 4, 5, 6, 7. This is the record's last validation level when the reason was created or modified. |

| 3 (DFRASTER) | image name | always contains 0000/0000000 for reason for data change records. This is simply a placeholder value as there is no CRF image. |

| 4 (DFSTUDY) | study number | the DFdiscover study number (must be constant across all records for the same study) |

| 5 (DFPLATE) | plate number | the plate number |

| 6 (DFSEQ) | visit/seq number | the visit or sequence number |

| 7 (DFPID) | subject ID | the subject identifier |

| 8 (DFRSNFLD) | reason field number | the data entry field number this reason note refers to. This numbering does not correspond directly to the DFdiscover schema numbering, but instead is offset by 3, so for example, the ID field which is always database field number 7 will always report the reason field number as 4. This is identical to the behavior of the field number reported in queries. |

| 9 (DFRSNCDE) | reason code | an optional coding of the reason for data change. Although this code field contains textual data, it should be possible to use it as a categorical variable. The code will typically come from the first field of the REASON lookup table, if it is defined. |

| 10 (DFRSNTXT) | reason text | required text that provides the reason for the data change. The maximum length of this field is 500 characters. |

| 11 (DFRSNCRT) | creation date | the creator, creation date and time stamp in the format name yy/mm/dd hh:mm:ss. This field is completed when the reason for data change is first created. |

| 12 (DFRSNMDF) | last modification date | the modifier, last modification date and time stamp in the format name yy/mm/dd hh:mm:ss. The initial value for this field is the same as the creation date. Each time the reason note is modified, the modification stamp is updated. |
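The offset between the reason field number (field 8) and the database field number is easy to get wrong, so a small worked example may help. The helper names below are hypothetical; the only fact taken from the table is that database field 7 is reported as reason field number 4.

```python
# Database field numbers are 3 higher than the field numbers stored in
# reason (and query) records: database field 7 is reported as 4.
REASON_FIELD_OFFSET = 3

def to_database_field(reason_field):
    """Convert a DFRSNFLD value to the database field number."""
    return reason_field + REASON_FIELD_OFFSET

def to_reason_field(database_field):
    """Convert a database field number to the value stored in DFRSNFLD."""
    return database_field - REASON_FIELD_OFFSET
```

Under this rule, the example record above, which stores 9 in field 8, refers to database field 12.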

Table 2.12. DFin.dat - the new records database

| Usual Name | DFin.dat |

| Type | clear text |

| Created By | DFserver.rpc |

| Used By | DFserver.rpc |

| Field Delimiter | | |

| Record Delimiter | \n |

| Comment Delimiter | NA |

| Fields/Record | 5+ |

| Description |

Each initial data record, created by the ICR software when it scans a CRF page, is passed to the study database server which appends it to this file. These are called "new records". All new records have the common fields and attributes identified in Table 2.13, “DFin.dat field descriptions”. The first 5 fields are added by the process that ICRed the page. The 4th and 5th fields were read from the barcode at the top of each CRF page. Depending upon the success of the ICR algorithm, subsequent fields in each new record may or may not be filled in. If a fax image is particularly difficult to read, it is possible that only the first five fields will appear in the new record. The first three fields, in addition to being delimited, are also in fixed column positions. The record status begins in the first column and is one column wide. The validation level begins in the third column and is one column wide. The raster name begins in the fifth column and is 12 columns wide. Columns two and four contain the field delimiter. |

| Example |

Here is an example of a new data record from 0|0|9145/0045001|099|4|1|0123||92/01/01||| This record has new record status, has not yet been validated, contains the data from fax image $(PAGE_DIR)/9145/0045001, and belongs to study 99, plate 4, visit 1, and subject ID 123. |

Table 2.13. DFin.dat field descriptions

| Field | Contains | Description |

|---|---|---|

| 1 (DFSTATUS) | record status | Enumerated value - always contains a 0 for new records, which is equivalent to the record status new |

| 2 (DFVALID) | validation level | Enumerated value - always contains a 0 for new records, which indicates that the record has only been validated by the ICR software |

| 3 (DFRASTER) | image name | the fax image from which this data record was derived. The value for this field will always be in the format yyww/ffffppp. Prepending this name with the value of the PAGE_DIR variable for the study creates a unique pathname to the file containing the fax image |

| 4 (DFSTUDY) | study number | the DFdiscover study number (must be constant across all records for the same study) |

| 5 (DFPLATE) | plate number | the plate number as identified in the barcode of the CRF |
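Because the first three fields of a new record occupy fixed column positions, they can be read by slicing rather than by splitting on the delimiter. The following sketch illustrates the column arithmetic described above; `read_fixed_prefix` is a hypothetical helper name.

```python
def read_fixed_prefix(record):
    """Return (status, level, raster) using fixed column positions.

    Columns are 1-based in the description, so column 1 is record[0]:
    status in column 1, level in column 3, raster in columns 5-16,
    with field delimiters in columns 2 and 4.
    """
    if len(record) < 17:
        raise ValueError("record is too short to hold the fixed columns")
    if record[1] != "|" or record[3] != "|" or record[16] != "|":
        raise ValueError("field delimiters are not in the expected columns")
    return int(record[0]), int(record[2]), record[4:16]
```

The check on column 17 confirms that the raster name is exactly 12 columns wide before the next delimiter.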

Table 2.14. YYMM.jnl - monthly database journal files

| Usual Name | YYMM.jnl |

| Type | clear text |

| Created By | DFserver.rpc |

| Used By | DF_ATfaxes, DF_ATmods, DF_WFcrfs, DF_WFqcs |

| Field Delimiter | | |

| Record Delimiter | \n |

| Comment Delimiter | NA |

| Fields/Record | 11+ |

| Description |

The study database server adds a record to the current study database journal file, each time that a data record is written to the study database, including when:

Separate journal files are kept for each study, and within a study, a new journal file is created for each month. The naming scheme for journal files is

The fields within each journal record are defined as described in Table 2.15, “YYMM.jnl field descriptions”. |

| Example |

Following is an example of a complete journal record.

The line breaks are for presentation purposes only.

980312|132426|valid1|d|1|1|9807/0047008|254|5|24|99001|ABC|06/07/97| 171|097|172|096|2|055.1|121.5|2||1143|00|*|*|*|1|1|*|1|1| 98/03/12 13:24:26|98/03/12 13:24:26| |

Table 2.15. YYMM.jnl field descriptions

| Field # | Contains | Description |

|---|---|---|

| 1 | date stamp | a date stamp, in YYMMDD format, identifying when the data record was written to the database |

| 2 | time stamp | a time stamp, in HHMMSS format, identifying when the data record was written to the database. Hours are reported in 24-hour notation. |

| 3 | username | the username of the person who wrote the record to the database. This is the login name of the user who modified the record. |

| 4 | record type | Enumerated value - indicates the type of the journal record. Possible values are: d for data record, q for query record, r for reason record, s for the beginning of a setup restructuring, and S for the end of a setup restructuring. |

| 5-11 | record keys | fields 5 through 11 contain the first 7 fields from the data record. |

| 12+ | record data fields | the remaining data fields (8 to the end of the record) follow. |
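The journal record layout can be illustrated with a short parser. This is a sketch under stated assumptions: it treats every record as having at least 11 fields (which the source states only as the Fields/Record value), it relies on Python's two-digit year handling for the date stamp, and `parse_journal_record` is an illustrative name. DFaudittrace remains the supported way to read journal files.

```python
from datetime import datetime

RECORD_TYPES = {
    "d": "data record", "q": "query record", "r": "reason record",
    "s": "setup restructuring begins", "S": "setup restructuring ends",
}

def parse_journal_record(line):
    """Split one journal record into stamp, user, type, keys and data."""
    fields = line.rstrip("\n").split("|")
    if len(fields) < 11:
        raise ValueError(f"expected at least 11 fields, got {len(fields)}")
    date, time, user, rtype = fields[:4]
    return {
        # YYMMDD + HHMMSS; %y maps 69-99 to 1969-1999 and 00-68 to 2000-2068.
        "when": datetime.strptime(date + time, "%y%m%d%H%M%S"),
        "user": user,
        "type": RECORD_TYPES[rtype],
        "keys": fields[4:11],   # first 7 fields of the written record
        "data": fields[11:],    # remaining fields of the written record
    }
```

The `keys` list holds the record status, validation level, raster name, study, plate, visit and subject ID, in that order.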

Table 2.16. DFaudit.db sqlite audit trail

| Usual Name | DFaudit.db |

| Type | binary |

| Created By | DFserver.rpc, DFauditdb |

| Used By | DFserver.rpc, DFedcservice, DF_SBhistory |

| Field Delimiter | NA |

| Record Delimiter | NA |

| Comment Delimiter | NA |

| Fields/Record | NA |

| Description |

All journal records are stored additionally in a SQLite database. The database contains table DFaudit and indexes. Columns in the DFaudit table match the output from DFaudittrace:

CREATE TABLE IF NOT EXISTS dfaudit (
dftype TEXT NOT NULL, -- 1 type: N=new, C=changed field, D=deleted
dfdate INTEGER NOT NULL, -- 2 date: yyyymmdd
dftime TEXT NOT NULL, -- 3 time: hhmiss
dfuser TEXT NOT NULL, -- 4 user
dfsubject INTEGER, -- 5 subject
dfvisit INTEGER, -- 6 visit
dfplate INTEGER NOT NULL, -- 7 plate
dffieldid INTEGER, -- 8 unique field id: data(=0), query(>0), reason(<0)
dffieldchange INTEGER, -- 9 changed field: data(0, unique id), query(field#), reason(field#)
dfstatus INTEGER, -- 10 data, query and reason status
dflevel INTEGER, -- 11 validation level
dfmaxlevel INTEGER, -- 12 maximum validation level reached
dfcategory TEXT, -- 13 missed record code, query category, reason code
dfuse TEXT, -- 14 missed record text, query usage, reason text
dfoldval TEXT, -- 15 old value
dfnewval TEXT, -- 16 new value
dffieldnum INTEGER, -- 17 field number
dffielddesc TEXT, -- 18 field description
dfoldlbl TEXT, -- 19 old coded field label
dfnewlbl TEXT, -- 20 new coded field label
dfuid INTEGER) -- 21 internal use for database restructure

CREATE INDEX IF NOT EXISTS dfidx_date ON dfaudit (dfdate, dfsubject)
CREATE INDEX IF NOT EXISTS dfidx_user ON dfaudit (dfuser)
CREATE INDEX IF NOT EXISTS dfidx_fuid ON dfaudit (dfuid)
CREATE INDEX IF NOT EXISTS dfidx_fid ON dfaudit (dffieldid)
CREATE INDEX IF NOT EXISTS dfidx_fnum ON dfaudit (dffieldnum)
CREATE INDEX IF NOT EXISTS dfidx_keys ON dfaudit (dfsubject, dfvisit, dfplate)
CREATE INDEX IF NOT EXISTS dfidx_vst ON dfaudit (dfvisit)
CREATE INDEX IF NOT EXISTS dfidx_plt ON dfaudit (dfplate)
CREATE INDEX IF NOT EXISTS dfidx_sta ON dfaudit (dfstatus, dflevel)
CREATE INDEX IF NOT EXISTS dfidx_lvl ON dfaudit (dflevel)

DFserver.rpc opens |
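As an illustration of how the dfaudit schema could be queried, the sketch below builds an in-memory copy of the table, inserts one made-up row, and lists the field changes for a single record by its keys. The sample values and the `changes_for_record` helper are hypothetical; only the table and column names come from the schema above.

```python
import sqlite3

# Column definitions copied from the schema above (comments omitted).
SCHEMA = """
CREATE TABLE IF NOT EXISTS dfaudit (
    dftype TEXT NOT NULL, dfdate INTEGER NOT NULL, dftime TEXT NOT NULL,
    dfuser TEXT NOT NULL, dfsubject INTEGER, dfvisit INTEGER,
    dfplate INTEGER NOT NULL, dffieldid INTEGER, dffieldchange INTEGER,
    dfstatus INTEGER, dflevel INTEGER, dfmaxlevel INTEGER,
    dfcategory TEXT, dfuse TEXT, dfoldval TEXT, dfnewval TEXT,
    dffieldnum INTEGER, dffielddesc TEXT, dfoldlbl TEXT, dfnewlbl TEXT,
    dfuid INTEGER)
"""

def changes_for_record(conn, subject, visit, plate):
    """Return changed-field rows (dftype 'C') for one set of keys."""
    sql = (
        "SELECT dfdate, dftime, dfuser, dffieldnum, dfoldval, dfnewval "
        "FROM dfaudit "
        "WHERE dfsubject = ? AND dfvisit = ? AND dfplate = ? AND dftype = 'C' "
        "ORDER BY dfdate, dftime"
    )
    return conn.execute(sql, (subject, visit, plate)).fetchall()

# Demonstration against an in-memory database with one invented row.
conn = sqlite3.connect(":memory:")
conn.execute(SCHEMA)
conn.execute(
    "INSERT INTO dfaudit (dftype, dfdate, dftime, dfuser, dfsubject, dfvisit, "
    "dfplate, dffieldnum, dfoldval, dfnewval) "
    "VALUES ('C', 20220103, '101500', 'user1', 99001, 1, 2, 9, '120', '125')"
)
rows = changes_for_record(conn, 99001, 1, 2)
```

Against a real study, one cautious option is to open a copy of the file read-only, for example `sqlite3.connect("file:DFaudit.db?mode=ro", uri=True)`, so that nothing the server maintains can be modified; the file location is left as an assumption here.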

Table 2.17. plt###.ndx - per plate index files

| Usual Name | plt###.ndx |

| Type | binary |

| Created By | DFserver.rpc |

| Used By | , DFshowIdx |

| Field Delimiter | NA |

| Record Delimiter | NA |

| Comment Delimiter | NA |

| Fields/Record | NA |

| Description |

Every study data file has a corresponding index file that the study server uses to track the current status and location of each record in the data file. The index entry for a particular data record includes the value of the key fields id, plate, and visit/sequence number, the record status, the validation level, the offset of the beginning of the record into the data file, and the length of the data record. When searching for a data record by keys, it is much more efficient for the database server to search the index file for matching keys and then use the offset and length to extract the data record from the data file. Each time an existing data record is modified or a new record is added, a new entry is made at the end of the index file for the new, modified copy of that record, and the status of the old index entry (if there was one) is changed to indicate that it has been superseded by a new entry.

Index and data files are sorted (on id and visit/sequence number) by the study

database server, each time the server exits.

Before sorting, an index file has

The first 32 bytes of an index file are header information, consisting of four

4-byte numbers that identify attributes of the file as a whole,

as described in Table 2.18, “

The 32 bytes of header information are followed by the actual index entries.

Each index entry is 32 bytes in size and is described in

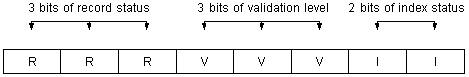

Table 2.19, “ Each index entry contains 8 bytes for the subject ID, 2 bytes for the visit number, 2 bytes for the plate number, 4 bytes for the offset into the data file, 2 bytes for the record length, a status byte and 13 bytes of padding. The status byte encodes three pieces of information: the record status (equivalent to the numeric value of the first byte of the data record), the record validation level (equivalent to the numeric value of the third byte of the data record), and the status of the index entry. This is encoded as illustrated in Figure 2.1, “Encoding for bits within the status byte”. The record status contains the same values as those allowed in the record status field of the data record, namely 0 through 7 (binary 111). Similarly, the validation level will take on the same values as those allowed in the validation level of the data record, again 0 through 7. The index status contains the value 2 if this is a new index entry, 1 if the index entry has been superseded by a newer one, and 0 otherwise. The size of all index files should always be a multiple of 32 bytes. |
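The 32-byte entry layout described above can be sketched with Python's `struct` module. Several details are assumptions rather than facts from this guide: the fields are taken to be unsigned and stored in the order listed, the byte order is assumed to be big-endian, and the status byte is returned undecoded because its bit layout is defined only in Figure 2.1. `iter_index_entries` is an illustrative name; DFshowIdx is the supported tool for inspecting index files.

```python
import struct

# 8-byte subject ID, 2-byte visit, 2-byte plate, 4-byte offset,
# 2-byte length, 1 status byte, 13 bytes of padding = 32 bytes.
# Assumption: big-endian, unsigned, fields stored in the order listed.
ENTRY = struct.Struct(">QHHIHB13x")
HEADER_SIZE = 32

def iter_index_entries(blob):
    """Yield one dictionary per index entry found after the header."""
    if len(blob) < HEADER_SIZE or len(blob) % ENTRY.size != 0:
        raise ValueError("index size must be a non-zero multiple of 32 bytes")
    for pos in range(HEADER_SIZE, len(blob), ENTRY.size):
        subject, visit, plate, offset, length, status = ENTRY.unpack_from(blob, pos)
        yield {
            "subject_id": subject, "visit": visit, "plate": plate,
            "offset": offset, "length": length,
            "status_byte": status,  # left undecoded; see Figure 2.1
        }
```

The size check mirrors the rule that every index file is a multiple of 32 bytes, which makes a truncated copy easy to detect before any entries are read.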

This directory contains the edit check source files.

Table 2.20. DFedits - edit checks

| Usual Name | DFedits |

| Type | clear text |

| Created By | DFsetup |

| Used By | DFexplore |

| Field Delimiter | NA |

| Record Delimiter | NA |

| Comment Delimiter | # |

| Fields/Record | NA |

| Description |

This file contains the edit checks that are defined for this study. The edit check language is fully described in Edit checks. |

| Example |

edit SetInit()

{

if ( dfblank( init ) && !dfblank( init[,0,1] ) )

init = init[,0,1];

}

edit AgeOk()

{

number age;

if ( !dfblank( p001v03 ) && !dfblank( p001v04 ) )

{

age = ( p001v03 - p001v04 ) / 365.25;

...

|

This directory contains the study configuration files, those files that make this study unique from every other DFdiscover study.

Table 2.21. DFcenters - sites database

| Usual Name | DFcenters |

| Type | clear text |

| Created By | DFsetup |

| Used By | all study tools and the standard reports including: DF_CTqcs, DF_CTvisits, DF_PTcrfs, DF_QCfax, DF_QCfaxlog, DF_QCreports, DF_QCupdate, DF_SScenters, DF_XXtime, and DF_qcsbyfield |

| Field Delimiter | | |

| Record Delimiter | \n |

| Comment Delimiter | NA |

| Fields/Record | 11+ |

| Description |

Each DFdiscover study has a sites database that records where each participating site is located, who the contact person is, and what subject ID number ranges are covered by each site ID.

Each site typically corresponds to a different clinical site, but this is not required. If necessary, a single clinical site may be defined as 2 or more sites, each corresponding to a different participating clinical investigator at that site. The sites database consists of one record per site ID. The field definitions are described in Table 2.22, “Field definitions for DFcenters”.

Each site ID must be a number in the range

Each comma inserts a one-second pause in the dialing sequence. This can be helpful when leaving a local PBX or waiting to dial an extension.

A valid email address may also be supplied in place of or together with a primary fax number. The email address must be specified using the notation

If more than one email and/or fax number is specified, each must be separated by a single space. Comma separators are not permitted.

Subject ID ranges are specified in fields 11 through the end of the record. There is no limit to the number of range fields that can be given. Each range field contains a minimum subject ID and maximum subject ID separated by exactly one space character. For example, |101 199| indicates that subject IDs 101 through 199 inclusive are to be included for the site.

Individual subject IDs that are disjoint from any range are indicated by setting both the minimum and maximum IDs to the actual subject ID. For example, |244 244|301 399|401 420| includes subject IDs 244, 301 through 399 inclusive, and 401 through 420 inclusive.

In the event that subject IDs are incorrectly entered in data records, there should always be a 'catch-all' site listed that receives all subject IDs that do not fall into any of the other subject ID ranges. This site is indicated by the phrase

| ||||||

| Example |

A single sites database record for a site that is responsible for subject IDs 1101 through 1149, and 1151 through 1199 inclusive.

011|Lisa Ondrejcek|DFnet|140 Lakeside Avenue, Suite 310, Seattle, Washington, 98122|1-206-322-5932|country:USA;enroll:100|1-206-322-5931|Lisa Ondrejcek|1-206-322-5931||1101 1149|1151 1199 |

Table 2.22. Field definitions for DFcenters

| Field # | Description | Required | Maximum Size |

|---|---|---|---|

| 1 | site ID | yes | 5 digits |

| 2 | Contact | yes | 30 characters |

| 3 | Name | yes | 40 characters |

| 4 | Address | no | 80 characters |

| 5 | Fax | no | 4096 characters |

| 6 | Attributes including Start Date, End Date, Enroll Target, Protocol Effective Date (x5), Protocol Version (x5). This field contains zero or more semi-colon ( | no | 4096 characters |

| 7 | Telephone (site) | no | 30 characters |

| 8 | Investigator | no | 30 characters |

| 9 | Telephone (investigator) | no | 30 characters |

| 10 | Reply to email address | no | 80 characters |

| 11+ | Subject ID ranges | yes | 30 digits, 1 space |
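The record layout above can be illustrated with a short Python sketch that splits one sites database record into fields and checks whether a subject ID falls in the site's ranges. This is only a sketch: the names `SiteRecord`, `parse_site_record`, and `covers_subject` are hypothetical and not part of DFdiscover, the `key:value` shape of the attributes field is inferred from the example record, and the parser is deliberately lenient about extra whitespace in range fields.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class SiteRecord:
    """A few fields from one DFcenters record (hypothetical helper type)."""
    site_id: int
    contact: str
    name: str
    attributes: Dict[str, str]
    ranges: List[Tuple[int, int]]


def parse_site_record(line: str) -> SiteRecord:
    """Split a '|'-delimited record and decode fields 1-3, 6, and 11+."""
    fields = line.rstrip("\n").split("|")
    if len(fields) < 11:
        raise ValueError("a sites record needs at least 11 fields")

    # Field 6: semicolon-delimited attributes. The key:value form is an
    # assumption based on the example record (country:USA;enroll:100).
    attributes: Dict[str, str] = {}
    for item in fields[5].split(";"):
        if item:
            key, _, value = item.partition(":")
            attributes[key] = value

    # Fields 11 through end of record: "min max" subject ID ranges.
    ranges: List[Tuple[int, int]] = []
    for raw in fields[10:]:
        parts = raw.split()  # lenient: tolerates stray whitespace
        if not parts:
            continue
        if len(parts) != 2:
            raise ValueError(f"bad range field: {raw!r}")
        low, high = int(parts[0]), int(parts[1])
        if low > high:
            raise ValueError(f"minimum exceeds maximum: {raw!r}")
        ranges.append((low, high))

    return SiteRecord(int(fields[0]), fields[1], fields[2], attributes, ranges)


def covers_subject(site: SiteRecord, subject_id: int) -> bool:
    """True if subject_id lies inside any of the site's inclusive ranges."""
    return any(low <= subject_id <= high for low, high in site.ranges)
```

Applied to the example record from Table 2.21, `parse_site_record` yields two ranges, and `covers_subject` reports subject 1150 as not covered because it falls in the gap between them.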

Table 2.23. DFccycle_map - conditional cycle map

| Usual Name | DFccycle_map |

| Type | clear text |

| Created By | DFsetup |

| Used By | DF_QCupdate |

| Field Delimiter | | |

| Record Delimiter | \n |

| Comment Delimiter | # |

| Fields/Record | 2+ |

| Description |

This table describes the conditional cycle map file structure and provides an example. It does not describe all of the syntax and rules related to this feature. Usage instructions for all 4 conditional maps are fully described in Study Setup User Guide, Conditional Maps. The file contains one or more specifications, each consisting of a condition definition followed by one or more actions to be applied if the condition is met. Entries in the file have the general appearance of:

IF|Visit List|Plate|Field|Value

AND|Visit List|Plate|Field|Value

[+-~]|List of conditional cycles

Each condition may be followed by one or more action statements. Each of these statements begins with: '+' to indicate that the cycles are required, '-' to indicate that the cycles are unexpected, or '~' to indicate that the cycles are optional, when the condition is met. There is no limit to the number of condition/action entries that may be included but the order in which the conditions appear may be important, because in the event of a conflict, the action specified by the last entry, applicable to each cycle, is the action that will be applied. This point is illustrated in the following example. |

| Example |

IF|0|1|22|6

+|2,5,8

-|3,6,9

~|4,7,10

IF|0|1|22|5

AND|0|9|13|>0

AND|0|9|36|!1

+|11

IF|1|3|9|~^A

-|11

This example consists of 3 conditional specifications. They are applied in the order in which they are defined. The first specification indicates that, if field 22 on plate 1 at visit 0 equals 6, then cycles 2, 5 and 8 are required; cycles 3, 6 and 9 are not expected; and cycles 4, 7 and 10 are optional. The second specification indicates that, if field 22 on plate 1 at visit 0 equals 5, and field 13 on plate 9 at visit 0 is greater than zero, and field 36 on plate 9 at visit 0 is not equal to 1, then cycle 11 is required. The third specification indicates that, if field 9 on plate 3 at visit 1 begins with the capital letter "A", then cycle 11 is not expected. If both conditions 2 and 3 are met, cycle 11 will be considered unexpected because, when a conflict occurs, the last condition wins. |
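The "last condition wins" rule can be sketched in Python as follows. This is an illustration only, not DFdiscover's implementation: the function names are hypothetical, each specification is represented as a pre-evaluated condition result plus its action lines, and cycle lists are assumed to be comma-separated numbers as in the example.

```python
from typing import Dict, List, Tuple

# Meaning of the leading symbol on each action line.
ACTION_MEANING = {"+": "required", "-": "unexpected", "~": "optional"}


def parse_cycle_action(line: str) -> Tuple[str, List[int]]:
    """Split an action line such as '+|2,5,8' into its symbol and cycles."""
    symbol, _, cycle_list = line.partition("|")
    if symbol not in ACTION_MEANING:
        raise ValueError(f"unknown action symbol in {line!r}")
    return symbol, [int(cycle) for cycle in cycle_list.split(",")]


def resolve_cycles(specs: List[Tuple[bool, List[str]]]) -> Dict[int, str]:
    """Apply specifications in file order; later entries overwrite earlier ones.

    Each item in specs is (condition_met, action_lines). How the condition
    itself is evaluated is outside the scope of this sketch.
    """
    status: Dict[int, str] = {}
    for condition_met, action_lines in specs:
        if not condition_met:
            continue
        for line in action_lines:
            symbol, cycles = parse_cycle_action(line)
            for cycle in cycles:
                status[cycle] = ACTION_MEANING[symbol]
    return status
```

With the second and third specifications of the example both met, `resolve_cycles([(True, ["+|11"]), (True, ["-|11"])])` marks cycle 11 as unexpected, because the later entry overwrites the earlier one.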

Table 2.24. DFcvisit_map - conditional visit map

| Usual Name | DFcvisit_map |

| Type | clear text |

| Created By | DFsetup |

| Used By | DF_QCupdate |

| Field Delimiter | | |

| Record Delimiter | \n |

| Comment Delimiter | # |

| Fields/Record | 2+ |

| Description |

This table describes the conditional visit map file structure and provides an example. It does not describe all of the syntax and rules related to this feature. Usage instructions for all 4 conditional maps are fully described in Study Setup User Guide, Conditional Maps. The file contains one or more specifications, each consisting of a condition definition followed by one or more actions to be applied if the condition is met. Entries in the file have the general appearance of:

IF|Visit List|Plate|Field|Value

AND|Visit List|Plate|Field|Value

[+-~]|List of conditional visits

Each condition may be followed by one or more action statements. Each of these statements begins with: '+' to indicate that the visits are required, '-' to indicate that the visits are unexpected, or '~' to indicate that the visits are optional, when the condition is met. There is no limit to the number of condition/action entries that may be included but the order in which the conditions appear may be important, because in the event of a conflict, the action specified by the last entry is the action that will be applied. This point is illustrated in the following example. |

| Example |

IF|0|1|22|6

+|10-19

-|20-29

~|30

IF|0|1|22|5

AND|0|9|13|>0

AND|0|9|36|!1

+|40

IF|1|3|9|~HIV

-|40

This example consists of 3 conditional specifications. They are applied in the order in which they are defined. The first specification indicates that, if field 22 on plate 1 at visit 0 equals 6, then visits 10 to 19 are required, visits 20 to 29 are unexpected, and visit 30 is optional. The second specification indicates that, if field 22 on plate 1 at visit 0 equals 5, and field 13 on plate 9 at visit 0 is greater than zero, and field 36 on plate 9 at visit 0 is not equal to 1, then visit 40 is required. The third specification indicates that, if field 9 on plate 3 at visit 1 contains the literal string "HIV", then visit 40 is not expected. If both conditions 2 and 3 are met, visit 40 will be considered unexpected because, when a conflict occurs, the last condition wins. |
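The Value tests used in the conditional map examples (a plain value, `>0`, `!1`, `~^A`, `~HIV`) can be approximated with the Python sketch below. The full syntax is documented in Study Setup User Guide, Conditional Maps, not here, so every rule in this sketch is an assumption inferred from the examples alone: a leading `~` is treated as a regular-expression search, `>` as a numeric comparison, `!` as string inequality, and anything else as exact string equality. The function name `value_matches` is hypothetical.

```python
import re


def value_matches(test: str, field_value: str) -> bool:
    """Approximate a conditional map Value test against a field's value.

    All four rules below are inferred from the documented examples and
    may not match DFdiscover's actual behavior in edge cases.
    """
    if test.startswith("~"):
        # '~^A' (begins with "A") and '~HIV' (contains "HIV") both behave
        # like a regular-expression search; assumed, not confirmed.
        return re.search(test[1:], field_value) is not None
    if test.startswith(">"):
        # '>0' is described as "greater than zero"; compare numerically.
        try:
            return float(field_value) > float(test[1:])
        except ValueError:
            return False  # blank or non-numeric values never match
    if test.startswith("!"):
        # '!1' is described as "not equal to 1".
        return field_value != test[1:]
    # A bare value such as '6' or 'yes' must match exactly.
    return field_value == test
```

For instance, `value_matches("~HIV", "non-HIV related")` is true under these assumptions, while `value_matches("yes", "yes please")` is false because a bare value requires an exact match.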

Table 2.25. DFcplate_map - conditional plate map

| Usual Name | DFcplate_map |

| Type | clear text |

| Created By | DFsetup |

| Used By | DF_QCupdate |

| Field Delimiter | | |

| Record Delimiter | \n |

| Comment Delimiter | # |

| Fields/Record | 2+ |

| Description |

This table describes the conditional plate map file structure and provides an example. It does not describe all of the syntax and rules related to this feature. Usage instructions for all 4 conditional maps are fully described in Study Setup User Guide, Conditional Maps. The file contains one or more specifications, each consisting of a condition definition followed by one or more actions to be applied if the condition is met. Entries in the file have the general appearance of:

IF|Visit List|Plate|Field|Value

AND|Visit List|Plate|Field|Value

[+-~]Visit List|List of conditional plates

Each condition may be followed by one or more action statements. Each of these statements begins with: '+' to indicate that the plates are required, '-' to indicate that the plates are unexpected, or '~' to indicate that the plates are optional, at the specified visits, when the condition is met. There is no limit to the number of condition/action entries that may be included but the order in which the conditions appear may be important, because in the event of a conflict, the action specified by the last entry, applicable to each plate, is the action that will be applied. This point is illustrated in the following example. |

| Example |

IF|0|1|22|6

+10,20|50,51

-10,20|40,41

~10,20|15

IF|0|1|22|5

AND|0|9|13|>0

AND|0|9|36|!1

+91-95|16

IF|1|3|9|yes

-91|16

This example consists of 3 conditional specifications. They are applied in the order in which they are defined. The first specification indicates that, if field 22 on plate 1 at visit 0 equals 6, then at visits 10 and 20: plates 50 and 51 are required, plates 40 and 41 are not expected, and plate 15 is optional. The second specification indicates that, if field 22 on plate 1 at visit 0 equals 5, and field 13 on plate 9 at visit 0 is greater than zero, and field 36 on plate 9 at visit 0 is not equal to 1, then at visits 91-95 plate 16 is required. The third specification indicates that, if field 9 on plate 3 at visit 1 contains exactly the string "yes", and nothing more, then plate 16 is not expected at visit 91. If both conditions 2 and 3 are met, plate 16 will be considered unexpected at visit 91, but required at visits 92-95, because, when a conflict occurs, the last condition wins. |
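The per-visit outcome described in the example (plate 16 unexpected at visit 91 but required at visits 92-95) can be reproduced with a small Python sketch. The helper names are hypothetical, the list syntax is assumed to be limited to comma-separated numbers and `low-high` ranges as seen in the examples, and the action lines passed in are assumed to belong to conditions that have already been met.

```python
from typing import Dict, List, Tuple

ACTION_MEANING = {"+": "required", "-": "unexpected", "~": "optional"}


def expand_list(text: str) -> List[int]:
    """Expand a list such as '10,20' or '91-95' into individual numbers."""
    numbers: List[int] = []
    for part in text.split(","):
        low, dash, high = part.partition("-")
        if dash:
            numbers.extend(range(int(low), int(high) + 1))  # inclusive range
        else:
            numbers.append(int(low))
    return numbers


def parse_plate_action(line: str) -> Tuple[str, List[int], List[int]]:
    """Split '+91-95|16' into its symbol, visit numbers, and plate numbers."""
    symbol, rest = line[0], line[1:]
    if symbol not in ACTION_MEANING:
        raise ValueError(f"unknown action symbol in {line!r}")
    visit_list, _, plate_list = rest.partition("|")
    return symbol, expand_list(visit_list), expand_list(plate_list)


def resolve_plates(action_lines: List[str]) -> Dict[Tuple[int, int], str]:
    """Apply action lines in file order, keyed by (visit, plate).

    A later line overwrites an earlier one only for the visit and plate
    pairs it names, which is why a conflict at visit 91 leaves 92-95 intact.
    """
    status: Dict[Tuple[int, int], str] = {}
    for line in action_lines:
        symbol, visits, plates = parse_plate_action(line)
        for visit in visits:
            for plate in plates:
                status[(visit, plate)] = ACTION_MEANING[symbol]
    return status
```

Calling `resolve_plates(["+91-95|16", "-91|16"])` gives "unexpected" for visit 91 and "required" for visits 92 through 95, matching the outcome described in the example.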

Table 2.26. DFcterm_map - conditional termination map

| Usual Name | DFcterm_map |

| Type | clear text |

| Created By | DFsetup |

| Used By | DF_QCupdate |

| Field Delimiter | | |

| Record Delimiter | \n |

| Comment Delimiter | # |

| Fields/Record | 1+ |

| Description |

This table describes the conditional termination map file structure and provides an example. It does not describe all of the syntax and rules related to this feature. Usage instructions for all 4 conditional maps are fully specified in Study Setup User Guide, Conditional Maps. The file contains one or more specifications, each consisting of a condition definition followed by one action to be applied if the condition is met. Entries in the file have the general appearance of:

IF|Visit List|Plate|Field|Value

AND|Visit List|Plate|Field|Value

A or E

Each condition is followed by either the letter 'A' (abort all follow-up), or 'E' (early termination of the current cycle). The termination date is defined as the visit date of the visit that triggered the condition, specifically the visit specified in the |

| Example |

IF|0|1|22|6

A

IF|6|1|22|5

AND|6|9|13|>0

AND|6|9|36|!1

E

This example consists of 2 conditional specifications. The first specification indicates that, if field 22 on plate 1 at visit 0 equals 6, then all follow-up terminates as of the visit date for visit 0. Visits scheduled to occur before this date are still expected, but visits scheduled following this date are not. The second specification indicates that, if field 22 on plate 1 at visit 6 equals 5, and field 13 on plate 9 at visit 6 is greater than zero, and field 36 on plate 9 at visit 6 is not equal to 1, then the current cycle terminates, i.e. the cycle in which visit 6 is defined, with the termination date being the visit date of visit 6. Any visits in this cycle (or in previous cycles) that were scheduled to occur before the termination date are still expected, but visits within this cycle scheduled following this date are not. On termination of a cycle, subject scheduling proceeds to the next cycle in the visit map, if there is one. |

Table 2.27. DFCRFType_map - CRF type map

| Usual Name | DFCRFType_map |

| Type | clear text |

| Created By | DFsetup |

| Used By | DFbatch, DFprintdb, DFimport.rpc, DFexport.rpc, DFcmpSchema |

| Field Delimiter | | |

| Record Delimiter | \n |

| Comment Delimiter | NA |

| Fields/Record | 2 |

| Description |